The gap between a working Jupyter notebook and a production ML system is enormous. We've helped dozens of teams bridge it. Here's what we've learned.

The Prototype Illusion

A data scientist builds a model in a notebook. It achieves 94% accuracy on the test set. Everyone celebrates. Then comes the question: "How do we deploy this?" And that's where the real work begins.

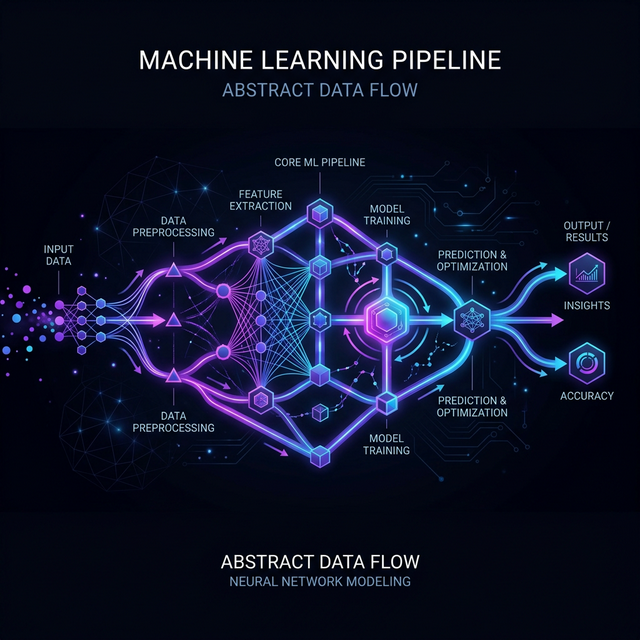

Production ML systems need to handle data drift, model versioning, feature stores, monitoring, rollback strategies, and serving infrastructure. The model itself is often less than 10% of the total system complexity.

The 5 Pillars of Production ML

1. Data Pipeline Reliability

Your model is only as good as its data. Build idempotent, observable pipelines with schema validation at every stage. Prefer batch over streaming unless latency demands otherwise.
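As a rough illustration of the idea above, here is a minimal sketch of one pipeline stage that validates rows against a schema and writes them idempotently. The `SCHEMA` columns, the `load` function, and the dict-backed `store` are hypothetical stand-ins, not a specific framework's API.

```python
import hashlib
import json

# Hypothetical schema for illustration: column name -> expected Python type.
SCHEMA = {"user_id": int, "amount": float, "country": str}


def validate(rows, schema=SCHEMA):
    """Split rows into (valid, errors) by checking each row against the schema."""
    valid, errors = [], []
    for i, row in enumerate(rows):
        problems = [
            f"{col}: expected {typ.__name__}"
            for col, typ in schema.items()
            if not isinstance(row.get(col), typ)
        ]
        if set(row) - set(schema):
            problems.append("unexpected columns")
        if problems:
            errors.append((i, problems))
        else:
            valid.append(row)
    return valid, errors


def batch_key(rows):
    """Deterministic key derived from content, so a rerun maps to the same key."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def load(rows, store):
    """Idempotent load: writing the same batch twice leaves the store unchanged."""
    valid, errors = validate(rows)
    store[batch_key(valid)] = valid
    # Returning counts makes the stage observable to whatever collects metrics.
    return {"written": len(valid), "rejected": len(errors)}
```

Keying the write on a content hash is one way to get idempotency; a real pipeline might key on a partition date or run ID instead.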

2. Feature Engineering at Scale

Feature stores aren't optional: they're the bridge between training and serving. Without one, training and serving usually compute features through separate code paths, and the resulting training-serving skew silently degrades model performance.
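The core idea behind avoiding skew can be sketched without any feature store product: both the offline and online paths call the same feature function. The field names (`amount`, `signup_date`, `as_of`) and function names below are assumptions made up for this example.

```python
import math
from datetime import date


def compute_features(raw):
    """Single source of truth for feature logic, shared by training and serving."""
    signup = date.fromisoformat(raw["signup_date"])
    as_of = date.fromisoformat(raw["as_of"])
    return {
        "log_amount": math.log1p(max(raw["amount"], 0.0)),
        "account_age_days": (as_of - signup).days,
    }


def build_training_rows(history):
    # Offline path: features are computed as of each historical event date.
    return [compute_features(record) for record in history]


def features_for_request(request):
    # Online path: the same function, with "as_of" set to the request date.
    return compute_features(request)
```

A feature store adds storage, backfills, and point-in-time lookups on top of this, but the shared definition is what removes the skew.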

3. Model Versioning & Registry

Every model in production should be traceable back to the exact dataset, code, and hyperparameters that created it. Tools like MLflow or Weights & Biases make this tractable.
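To make the traceability requirement concrete, here is a minimal sketch of a lineage record using only the standard library. It is not the MLflow or Weights & Biases API; `lineage_record` and `InMemoryRegistry` are hypothetical names, and a real registry would persist records and store the model artifact as well.

```python
import hashlib
import json


def fingerprint(data: bytes) -> str:
    """Content hash used to identify a dataset or a record."""
    return hashlib.sha256(data).hexdigest()


def lineage_record(dataset_bytes, code_version, hyperparams):
    """Build a record tying a model to its dataset, code, and hyperparameters."""
    record = {
        "dataset_sha256": fingerprint(dataset_bytes),
        "code_version": code_version,  # for example, a git commit hash
        "hyperparams": hyperparams,
    }
    # The version is derived from the inputs, so identical inputs give one version.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["model_version"] = fingerprint(canonical)[:12]
    return record


class InMemoryRegistry:
    """Stand-in for a real model registry (assumption: in-memory only)."""

    def __init__(self):
        self._models = {}

    def register(self, record):
        self._models[record["model_version"]] = record
        return record["model_version"]

    def lookup(self, version):
        return self._models[version]
```

Given a version string seen in production logs, `lookup` returns the dataset hash, code version, and hyperparameters needed to reproduce the model.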

4. Serving Infrastructure

Choose your serving pattern based on latency requirements:

- Batch scoring: precompute predictions on a schedule. Simple and reliable.

- Real-time API: the model sits behind a REST or gRPC endpoint, typically with a latency target under 100 ms.

- Edge deployment: the model runs on the device, which requires model compression and optimization.
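The batch scoring pattern is the easiest of the three to sketch. In the hedged example below, a scheduled job precomputes predictions and request handling becomes a lookup; `PredictionCache`, `batch_score`, and `toy_model` are hypothetical names, and a real system would likely write to a key-value store rather than a Python dict.

```python
def batch_score(model, feature_rows):
    """Scheduled job: precompute one prediction per entity id."""
    return {entity_id: model(features) for entity_id, features in feature_rows.items()}


class PredictionCache:
    """Serves precomputed predictions; no model call happens at request time."""

    def __init__(self, default=None):
        self._predictions = {}
        self._default = default

    def refresh(self, predictions):
        # Swap in the whole new table at once so readers never see a partial run.
        self._predictions = dict(predictions)

    def get(self, entity_id):
        # Entities missing from the last run fall back to a default prediction.
        return self._predictions.get(entity_id, self._default)


def toy_model(features):
    # Hypothetical stand-in for a trained model.
    return 1.0 if features["visits"] > 3 else 0.0
```

The trade-off is freshness: predictions are only as current as the last scheduled run, which is why the real-time pattern exists.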

5. Monitoring & Observability

Production models degrade silently. You need to monitor not just system metrics (latency, throughput) but also model metrics (prediction distribution, feature drift, accuracy on labeled subsets).
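One common way to quantify feature drift is the Population Stability Index (PSI), which compares the binned distribution of a live sample against a reference sample. The sketch below is one possible choice rather than the only metric; the equal-width binning, the bin count, and the `eps` floor are assumptions to tune for your data.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the reference range land in edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical samples score zero and the score grows as the distributions diverge. The alerting threshold is a judgment call that depends on the feature and should be calibrated against historical data.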

Lessons from the Field

After deploying systems that serve millions of predictions daily, we keep coming back to three lessons:

- Start simple. A well-engineered linear model in production beats a complex ensemble in a notebook.

- Invest in testing. Unit tests for data transformations, integration tests for pipelines, shadow mode for new models.

- Automate retraining. Models that retrain automatically on fresh data keep pace with drift, and in our experience they dramatically outperform models that are trained once and left alone.
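Shadow mode, mentioned in the testing lesson above, can be sketched in a few lines: the candidate model sees live traffic, but only the primary model's output is returned. `predict_with_shadow` and the list-based `log` are hypothetical; a production version would typically run the shadow call asynchronously and ship the comparison records to a metrics store.

```python
import logging

logger = logging.getLogger("shadow")


def predict_with_shadow(primary, shadow, features, log=None):
    """Return the primary model's prediction; record the shadow's for comparison."""
    result = primary(features)
    try:
        shadow_result = shadow(features)
    except Exception:
        # A failing candidate must never affect live traffic.
        logger.exception("shadow model failed")
        shadow_result = None
    if log is not None:
        log.append({"features": features, "primary": result, "shadow": shadow_result})
    return result
```

Comparing the logged pairs over time shows how the candidate would have behaved before it serves a single user.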

Need help scaling your ML pipeline?

We've done this dozens of times. Let's talk about your architecture.
